1. The Problem with Prompt-Based AI

Large language models have dazzled us with their conversational fluency — but in the enterprise, words alone don't move money.

Ask any banking technologist: the moment you try to deploy an LLM into a real workflow — credit underwriting, fraud review, or wealth advisory — you hit a wall.

That wall is context.

Current LLMs operate like brilliant interns with amnesia.

Each prompt is a fresh conversation. They can't securely remember what they saw yesterday, access what sits inside the bank's systems, or verify that the data they're reasoning on is even real-time.

So we wrap them in endless layers of glue code, retrieval pipelines, and API adapters — temporary scaffolding that never quite scales.

We've turned model integration into artisanal craftsmanship instead of enterprise engineering.

2. Enter MCP — The Protocol for Context

The Model Context Protocol (MCP) proposes something deceptively simple: a standard way for AI models to discover, request, and use context — not just data.

Where HTTP standardized how computers exchange hypertext, MCP standardizes how models exchange meaningful context: tools, data sources, prompt templates, and the permissions around them.

Instead of hard-coding every integration, MCP defines how a model can ask a compliant system:

"What tools can I use?"

"What data am I allowed to see?"

"How do I call this function safely?"

MCP turns ad-hoc prompt engineering into structured protocol negotiation — transforming the model from a text predictor into a context-aware collaborator.
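Here is a minimal sketch of what those three questions look like on the wire. MCP messages are JSON-RPC 2.0, and the method names `tools/list`, `resources/list`, and `tools/call` come from the MCP specification. The tool name `getFXRate` and its arguments are hypothetical placeholders borrowed from the FX example later in this article. The helper only builds request objects and does not open a connection.

```python
import itertools
import json
from typing import Optional

_next_id = itertools.count(1)  # JSON-RPC request ids, unique within a session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object of the kind MCP clients send."""
    msg = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# "What tools can I use?"
list_tools = jsonrpc_request("tools/list")
# "What data am I allowed to see?"
list_resources = jsonrpc_request("resources/list")
# "How do I call this function safely?"  (tool name and arguments are hypothetical)
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "getFXRate", "arguments": {"base": "EUR", "quote": "USD"}},
)

for msg in (list_tools, list_resources, call_tool):
    print(json.dumps(msg))
```

In a live session, the host application's MCP client sends these requests after an `initialize` handshake. The model itself does not send them.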

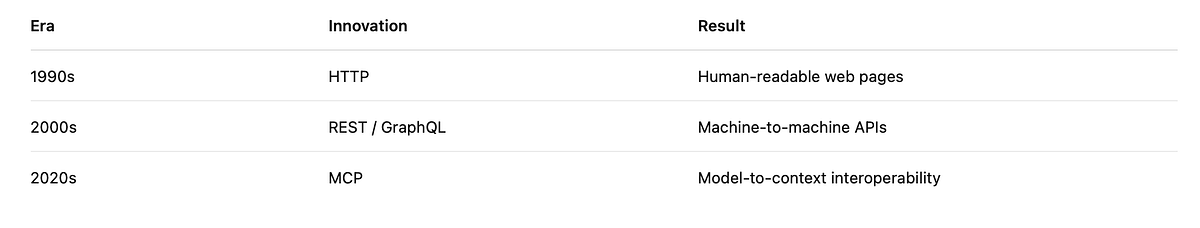

3. Analogy: MCP is to AI what HTTP was to the Web

In the early 1990s, online information was scattered across incompatible systems: FTP archives, Gopher menus, and proprietary dial-up services. The Web only took off when HTTP gave every server and browser a common language for requests and responses.

MCP does the same for AI.

By giving LLMs a way to negotiate context — securely, declaratively, and reproducibly — MCP paves the way for an Internet of Models.

4. From Data to Context

Traditional APIs return data. MCP nodes expose context.

- Dataset: "Here are 1,000 transactions."

- Context: "Here's a customer's 90-day spending pattern, governed by policy XYZ, callable only by audited agents."

Context carries semantics, provenance, and policy — everything an AI system needs to act responsibly inside a regulated institution.
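One way to picture the difference is a payload wrapped in an envelope. The `ContextEnvelope` type below is a hypothetical illustration, not a structure defined by MCP. Its field names (`meaning`, `policy`, `allowed_callers`) are assumptions chosen to mirror the "context" bullet above, and all values are made up.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ContextEnvelope:
    """Hypothetical envelope: a data payload plus semantics, provenance, and policy."""

    payload: dict            # the data itself (illustrative values below)
    meaning: str             # semantics: what the payload represents
    source: str              # provenance: which system produced it
    as_of: datetime          # provenance: when it was produced
    policy: str              # identifier of the governing policy
    allowed_callers: frozenset = field(default_factory=frozenset)

    def readable_by(self, caller: str) -> bool:
        """True only for callers on the envelope's allow-list."""
        return caller in self.allowed_callers


spending = ContextEnvelope(
    payload={"window_days": 90, "top_category": "travel", "monthly_average": 2150.0},
    meaning="customer 90-day spending pattern",
    source="core-banking/transactions",
    as_of=datetime(2025, 10, 28, 20, 10, tzinfo=timezone.utc),
    policy="XYZ",
    allowed_callers=frozenset({"audited-agent-01"}),
)

print(spending.readable_by("audited-agent-01"))  # True
print(spending.readable_by("anonymous-bot"))     # False
```

A bare dataset would carry only `payload`. The other fields are what let a downstream system decide whether, and how, to use it.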

5. Why Banks Care

Financial institutions run on boundaries — risk, data, compliance.

AI, by nature, thrives on permeability: its power lies in synthesizing insights across silos.

That tension defines today's context gap.

- Siloed data: core banking, CRM, payments, and compliance systems rarely share a schema.

- Opaque reasoning: prompt-only models can't explain which data informed an answer.

- Governance risk: each manual integration is another attack surface for leakage or drift.

MCP resolves that tension by making context-sharing a governed, first-class operation.

Through MCP, a bank can:

- Expose selected capabilities (e.g., "Get FX Rates", "Check Credit Policy") as context nodes.

- Describe input/output schemas, authentication, and audit metadata.

- Let approved models discover and invoke those nodes dynamically — without new glue code.

Every call can be logged, typed against its schema, and traced.

Compliance meets composability.
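MCP servers advertise each capability as a tool descriptor with a `name`, a `description`, and an `inputSchema` written in JSON Schema. That shape comes from the specification, and `annotations.readOnlyHint` is a behavioural hint, not an enforcement mechanism. The tool name `checkCreditPolicy` and the separate `AUDIT_METADATA` table are hypothetical. The small validator checks only required fields, extra fields, string types, and enums. A production node would use a full JSON Schema library instead.

```python
# Tool descriptor in the shape MCP servers return from tools/list.
CHECK_CREDIT_POLICY = {
    "name": "checkCreditPolicy",  # hypothetical tool name
    "description": "Return the credit policy limits that apply to a product.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "product": {"type": "string", "enum": ["mortgage", "personal_loan", "card"]},
            "region": {"type": "string"},
        },
        "required": ["product"],
        "additionalProperties": False,
    },
    "annotations": {"readOnlyHint": True},  # a hint to clients, not a guarantee
}

# Hypothetical bank-side audit metadata kept next to the descriptor (not an MCP field).
AUDIT_METADATA = {"checkCreditPolicy": {"owner": "credit-risk", "log_arguments": True}}


def validate_arguments(tool: dict, arguments: dict) -> list[str]:
    """Minimal subset of JSON Schema checks; returns a list of error messages."""
    schema = tool["inputSchema"]
    errors = []
    for name in schema.get("required", []):
        if name not in arguments:
            errors.append(f"missing required argument: {name}")
    for name, value in arguments.items():
        spec = schema["properties"].get(name)
        if spec is None:
            # additionalProperties is False, so unknown arguments are rejected
            errors.append(f"unexpected argument: {name}")
            continue
        if spec.get("type") == "string" and not isinstance(value, str):
            errors.append(f"{name} must be a string")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{name} must be one of {spec['enum']}")
    return errors


print(validate_arguments(CHECK_CREDIT_POLICY, {"product": "mortgage"}))  # []
print(validate_arguments(CHECK_CREDIT_POLICY, {"region": "EU"}))
```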

6. A Simple Illustration: Live FX Rates via MCP

Imagine a conversational copilot on a treasury desk.

Without MCP:

User: "What's the EUR/USD spot rate right now?"

Model: Attempts to recall training data or hits an external API (if allowed).

Governance alarm bells ring.

With MCP:

Model → MCP Node: "Call getFXRate(base=EUR, quote=USD) under policy 'treasury-read-only'."

MCP Node → Model: "Response: 1.0872 (Reuters feed @ 2025-10-28 20:10 UTC)."

Everything about that interaction — source, timestamp, authorization — travels with the context.

No hard-coding, no shadow APIs, and an auditable trail for risk officers.
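The exchange above can be sketched as a server-side handler. Everything here is hypothetical: `getFXRate`, the `treasury-read-only` policy table, and the hard-coded feed entry, which only echoes the illustrative rate and source from the dialogue. The result follows MCP's `tools/call` result shape, a `content` list of typed items plus an `isError` flag. Carrying source, timestamp, and policy inside the text payload is one possible convention, not a protocol requirement.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy table: which policies may invoke which tools.
POLICY_GRANTS = {"treasury-read-only": {"getFXRate"}}

# Illustrative stand-in for a live market-data feed (values copied from the dialogue above).
FAKE_FEED = {("EUR", "USD"): (1.0872, "Reuters feed")}


def handle_tools_call(request: dict, policy: str, now: datetime) -> dict:
    """Answer a JSON-RPC tools/call request for the hypothetical getFXRate tool."""
    params = request["params"]
    if params["name"] not in POLICY_GRANTS.get(policy, set()):
        result = {"content": [{"type": "text", "text": "not permitted under " + policy}],
                  "isError": True}
    else:
        args = params["arguments"]
        rate, source = FAKE_FEED[(args["base"], args["quote"])]
        # Provenance and authorization travel with the value.
        body = {"rate": rate, "source": source,
                "as_of": now.strftime("%Y-%m-%d %H:%M UTC"), "policy": policy}
        result = {"content": [{"type": "text", "text": json.dumps(body)}], "isError": False}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


request = {"jsonrpc": "2.0", "id": 7, "method": "tools/call",
           "params": {"name": "getFXRate", "arguments": {"base": "EUR", "quote": "USD"}}}
stamp = datetime(2025, 10, 28, 20, 10, tzinfo=timezone.utc)
print(handle_tools_call(request, "treasury-read-only", stamp))
```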

7. Under the Hood — Conceptual Architecture

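Think of MCP as an overlay between models and systems. The application that hosts the model runs an MCP client. Each client connects to an MCP server (a node, in this article's terms), which wraps an existing system and exchanges JSON-RPC 2.0 messages with the client over a transport such as stdio or HTTP.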
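To make the overlay concrete, here is a minimal sketch of an MCP-style server sitting in front of an existing system. It assumes the stdio transport, where each JSON-RPC message travels as one line of JSON. The method names (`initialize`, `tools/list`, `tools/call`) follow the specification, and the error codes are standard JSON-RPC codes. The wrapped lookup function, its policy text, and the `checkCreditPolicy` tool are hypothetical. Real servers would normally be built with an official MCP SDK rather than hand-rolled dispatch.

```python
import io
import json
from typing import Optional, TextIO


def legacy_credit_policy_lookup(product: str) -> str:
    """Stand-in for an existing bank system the overlay wraps (hypothetical data)."""
    return {"mortgage": "max LTV 80%"}.get(product, "no policy on file")


TOOLS = [{
    "name": "checkCreditPolicy",  # hypothetical tool name
    "description": "Look up the credit policy for a product.",
    "inputSchema": {"type": "object",
                    "properties": {"product": {"type": "string"}},
                    "required": ["product"]},
}]


def dispatch(msg: dict) -> Optional[dict]:
    """Route one JSON-RPC message; notifications (no id) get no reply."""
    if "id" not in msg:
        return None
    method, params = msg["method"], msg.get("params", {})
    if method == "initialize":
        # Simplification: echo the client's protocol version as if we support it.
        result = {"protocolVersion": params.get("protocolVersion"),
                  "capabilities": {"tools": {}},
                  "serverInfo": {"name": "bank-overlay-sketch", "version": "0.1"}}
    elif method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        if params.get("name") != "checkCreditPolicy":
            return {"jsonrpc": "2.0", "id": msg["id"],
                    "error": {"code": -32602, "message": "unknown tool"}}
        text = legacy_credit_policy_lookup(params["arguments"]["product"])
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return {"jsonrpc": "2.0", "id": msg["id"],
                "error": {"code": -32601, "message": "method not found"}}
    return {"jsonrpc": "2.0", "id": msg["id"], "result": result}


def serve(inp: TextIO, out: TextIO) -> None:
    """Stdio-style loop: one JSON message per line in, one reply per line out."""
    for line in inp:
        if line.strip():
            reply = dispatch(json.loads(line))
            if reply is not None:
                out.write(json.dumps(reply) + "\n")


session = io.StringIO(
    '{"jsonrpc": "2.0", "id": 1, "method": "initialize", "params": {"protocolVersion": "2025-06-18"}}\n'
    '{"jsonrpc": "2.0", "method": "notifications/initialized"}\n'
    '{"jsonrpc": "2.0", "id": 2, "method": "tools/call", '
    '"params": {"name": "checkCreditPolicy", "arguments": {"product": "mortgage"}}}\n'
)
out = io.StringIO()
serve(session, out)
print(out.getvalue())
```

Swapping the `StringIO` objects for `sys.stdin` and `sys.stdout`, and flushing after each write, would let the same loop run as a stdio server. The legacy function never learns that a model is on the other side.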

8. Interoperability and Composability

Because MCP nodes describe schemas and policies in standard formats, they become LEGO blocks.

Multiple nodes can be composed at runtime by an AI agent:

"To evaluate a loan, pull credit score (node A), income verification (node B), and risk policy (node C)."

This composability turns individual AI models into contextual ecosystems — the foundation for multi-agent architectures we'll explore later.
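Here is a minimal sketch of runtime composition for the loan example. It assumes three hypothetical nodes (credit score, income verification, risk policy) that an agent has already discovered. Each node is modelled as a plain function with the same call signature so the agent can chain them. In practice, each would be a `tools/call` to a separate server. The scores, income, and thresholds are invented for illustration and are not real underwriting rules.

```python
from typing import Callable


# Hypothetical nodes A, B and C with made-up responses.
def credit_score_node(args: dict) -> dict:
    return {"score": 712}


def income_verification_node(args: dict) -> dict:
    return {"verified_annual_income": 85_000}


def risk_policy_node(args: dict) -> dict:
    return {"min_score": 680, "max_loan_to_income": 4.5}


REGISTRY: dict[str, Callable[[dict], dict]] = {
    "creditScore": credit_score_node,
    "incomeVerification": income_verification_node,
    "riskPolicy": risk_policy_node,
}


def evaluate_loan(applicant_id: str, amount: float, plan: list[str]) -> dict:
    """Call each node named in the plan, merge the results, then apply the policy."""
    context: dict = {}
    for node_name in plan:
        context.update(REGISTRY[node_name]({"applicant_id": applicant_id}))
    ratio = amount / context["verified_annual_income"]
    approved = (context["score"] >= context["min_score"]
                and ratio <= context["max_loan_to_income"])
    # Returning the merged evidence shows which inputs informed the decision.
    return {"approved": approved, "loan_to_income": round(ratio, 2), "evidence": context}


decision = evaluate_loan("cust-123", 300_000, ["creditScore", "incomeVerification", "riskPolicy"])
print(decision["approved"], decision["loan_to_income"])  # True 3.53
```

Because the plan is just a list of node names, an agent can assemble a different plan for a different product without new integration code.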

9. Security and Auditability by Design

Financial AI cannot rely on trust alone. An MCP deployment can be built around three principles:

- Least Privilege: each node declares who can call what.

- Contextual Logging: every interaction carries metadata about who called which capability, with which inputs.

- Deterministic Access: typed schemas replace free-form requests, reducing the prompt ambiguity that can cause leakage.

MCP bakes in the controls regulators wish LLMs had from day one.
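Here is a minimal sketch of how a gateway in front of MCP nodes might apply those three principles on every call. The grant table, caller names, required-argument lists, and in-memory audit log are all hypothetical. A real deployment would back them with an identity provider, full schema validation, and an append-only audit store.

```python
import json
from datetime import datetime, timezone
from typing import Callable

# Hypothetical least-privilege table: caller identity -> tools it may invoke.
GRANTS = {"treasury-copilot": {"getFXRate"}, "credit-agent": {"checkCreditPolicy"}}

# In-memory stand-in for an append-only audit store.
AUDIT_LOG: list[dict] = []


def guarded_call(caller: str, tool: str, arguments: dict,
                 required: list[str], handler: Callable[..., str]) -> dict:
    """Check grants and required arguments, log the attempt, then maybe invoke."""
    if tool not in GRANTS.get(caller, set()):
        outcome = "denied"            # least privilege
    elif any(name not in arguments for name in required):
        outcome = "rejected"          # request does not match the declared inputs
    else:
        outcome = "allowed"
    AUDIT_LOG.append({                # contextual logging: who, what, which inputs
        "ts": datetime.now(timezone.utc).isoformat(),
        "caller": caller,
        "tool": tool,
        "arguments": json.dumps(arguments, sort_keys=True),
        "outcome": outcome,
    })
    if outcome != "allowed":
        return {"isError": True, "content": [{"type": "text", "text": outcome}]}
    return {"isError": False, "content": [{"type": "text", "text": handler(**arguments)}]}


def fx_handler(base: str, quote: str) -> str:
    """Hypothetical handler; the rate is the illustrative value from section 6."""
    return f"{base}/{quote} 1.0872"


fx_args = {"base": "EUR", "quote": "USD"}
guarded_call("treasury-copilot", "getFXRate", fx_args, ["base", "quote"], fx_handler)
guarded_call("credit-agent", "getFXRate", fx_args, ["base", "quote"], fx_handler)
print([entry["outcome"] for entry in AUDIT_LOG])  # ['allowed', 'denied']
```

Denied and rejected attempts are logged as well as successful ones. That is what lets a risk officer reconstruct what an agent tried to do, not just what it achieved.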

10. Why This Matters Now

Anthropic introduced MCP as an open standard in late 2024, and OpenAI and others have since adopted it, making it a strong candidate for the next layer of AI infrastructure.

For banks experimenting with copilots and assistants, this is the moment to shift from projects to platforms.

MCP provides the governance and standardization that let LLMs graduate from sandbox pilots to production-grade financial systems.

Just as HTTP enabled the Internet economy, MCP could underpin the Financial AI Internet — where every model speaks context natively.

11. Real-World Momentum — ContextForge MCP Gateway

A new wave of implementations is making this vision tangible.

ContextForge MCP Gateway, for example, is emerging as an open, enterprise-ready bridge between LLMs and business systems.

It packages registry, authentication, and audit layers so developers can expose existing APIs as compliant context nodes in minutes — not months.

For banks exploring MCP, ContextForge demonstrates that the protocol is no longer theoretical; it's the connective tissue already powering pilot deployments in payments, credit, and document intelligence.

12. The Language of Context

MCP encodes a new grammar for AI interactions — moving from unstructured prompts to structured context protocols.

13. From Prompts to Protocols

The trajectory of AI integration is clear: from manual prompt engineering to automated protocol-based context sharing.

14. The Road Ahead

In this series, we'll move from concept to construction:

- Why banks urgently need context → Article 2

- How to build an MCP node → Article 3

- Where it creates value in risk, compliance & CX → Article 4

- How it ties into Agents & ModelOps → Article 5

- What the future financial AI fabric looks like → Article 6

✳️ Closing Thought

When the Web began, the browser was the revolutionary interface.

Today, the LLM is.

But without HTTP, the Web would have stayed a toy for researchers.

Without MCP, enterprise AI will remain stuck in prompt experiments.

Model Context Protocol is the missing language that lets AI systems finally speak enterprise.

🔜 Next in the Series

👉 "Context is the New Data: Why Banks Need MCP."

We'll examine how context fragmentation costs millions in inefficiencies — and how MCP turns that chaos into a governed data advantage.